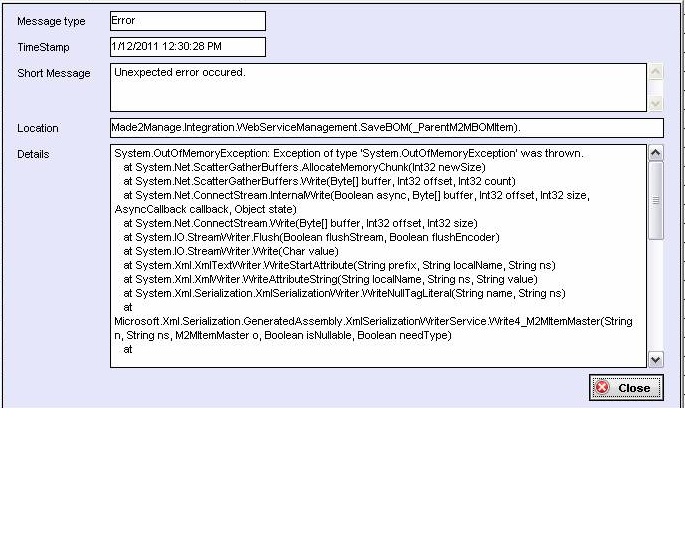

Spark jobs can fail with out-of-memory exceptions at either the driver or the executor. Common cases include an exception caused by the Spark driver running out of memory and a job failure because theo… One such error begins `SparkException: Job aborted due to stage failure: Total size of serialized…`

When compressing the contents of a .NET `MemoryStream`, use its `GetBuffer` method to avoid copying the data into a new byte array first. Write only the first `Length` bytes of that buffer, because the underlying byte array used by the `MemoryStream` may be larger than the amount of data it contains.
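The `GetBuffer` advice can be sketched as follows. This is a minimal illustration, not the original author's code: the class name `BufferCompression`, its two methods, and the choice of `GZipStream` as the compressor are assumptions, since the text does not say which compressor is used. It also assumes the `MemoryStream` was created with its default constructor, so its buffer is exposed and the data starts at index 0.

```csharp
using System;
using System.IO;
using System.IO.Compression;

// Hypothetical helper class; the name and GZip choice are illustrative only.
public static class BufferCompression
{
    // Compresses the data held by `source` without calling ToArray(),
    // which would allocate and fill a second byte array.
    public static byte[] Compress(MemoryStream source)
    {
        // GetBuffer returns the stream's underlying array. It can be longer
        // than the data written so far, so only Length bytes are valid.
        byte[] buffer = source.GetBuffer();
        int count = checked((int)source.Length);

        using (var output = new MemoryStream())
        {
            // leaveOpen keeps `output` usable after the GZipStream is disposed;
            // disposing the GZipStream flushes the final compressed block.
            using (var gzip = new GZipStream(output, CompressionMode.Compress, leaveOpen: true))
            {
                gzip.Write(buffer, 0, count);
            }
            return output.ToArray();
        }
    }

    // Inverse operation, included so the round trip can be checked.
    public static byte[] Decompress(byte[] compressed)
    {
        using (var input = new MemoryStream(compressed))
        using (var gzip = new GZipStream(input, CompressionMode.Decompress))
        using (var result = new MemoryStream())
        {
            gzip.CopyTo(result);
            return result.ToArray();
        }
    }
}
```

Note that `GetBuffer` throws `UnauthorizedAccessException` when the stream was constructed over a caller-supplied array without making the buffer publicly visible, for example with `new MemoryStream(byte[])`. In that case `ToArray()` or `TryGetBuffer` is the fallback.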
